From Prompt to Policy: Building Ethical GenAI Chatbots for Enterprises

I. Introduction: The Double-Edged Sword of GenAI

The development of enterprise automation through Generative AI (GenAI) enables digital assistants and chatbots to understand user intent, generate appropriate responses, and take predictive actions. The promise of continuous, intelligent interaction at scale also raises ethical challenges, including biased outputs, misinformation, regulatory non-compliance, and user mistrust. Deploying GenAI is no longer a question of capability; it has become a matter of responsibility and appropriate implementation. A McKinsey report indicates that more than half of enterprises have begun using GenAI tools, primarily for customer service and operational functions. As the technology scales, so do its implications for fairness standards, security measures, and compliance requirements. GenAI chatbots are already transforming public- and private-sector interactions through deployments such as virtual agents in banking and multilingual government helplines.

II. Enterprise-Grade Chatbots: A New Class of Responsibility

Consumer applications often tolerate chatbot errors without serious consequence. In enterprise environments such as finance, healthcare, and government, the stakes are much higher: a flawed output can result in misinformation, compliance violations, or even legal penalties. Ethical behavior is not just a social obligation; it is a business-critical imperative. Enterprises need frameworks to ensure that AI systems respect user rights, comply with regulations, and maintain public trust.

III. From Prompt to Output: Where Ethics Begins

Every GenAI system begins with a prompt, but what happens between input and output is a complex web of training data, model weights, reinforcement logic, and risk mitigation. Ethical issues can emerge at any step:

- Ambiguous or culturally biased prompts

- Non-transparent decision paths

- Responses based on outdated or inaccurate data

Without robust filtering and interpretability mechanisms, enterprises may unwittingly deploy systems that reinforce harmful biases or fabricate information.

IV. Ethical Challenges in GenAI-Powered Chatbots

- Training on historical data tends to reinforce existing social and cultural biases.

- LLMs can produce responses that contain factual inaccuracies or fabricated content.

- Unintended model behavior can leak sensitive enterprise or user information.

- Without multilingual and cross-cultural understanding, GenAI systems can alienate users from different cultural backgrounds.

- GenAI systems without built-in moderation can generate inappropriate or coercive messages.

- Unverified AI-generated content can spread false or misleading information rapidly across regulated sectors.

- Because these models operate as black boxes, their lack of auditability makes it difficult to trace the source of a specific output.

These challenges vary in severity and manifest differently from one industry to another. A hallucinated answer from a retail chatbot might confuse customers, but the same kind of error in healthcare could have fatal consequences.
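One common mitigation for the leakage risk above is to scan model output for sensitive data before the user sees it. The following Python sketch relies on simple regular expressions. The pattern names, the `redact` function, and the masking format are all hypothetical. Real deployments typically use a dedicated PII-detection service with locale-aware rules.

```python
import re

# Hypothetical patterns for illustration only; they are applied in the
# order listed. Production systems need broader, locale-specific detection.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Mask sensitive substrings in a model response before it reaches the user.

    Returns the redacted text plus the categories that matched, so the caller
    can log the event for audit without storing the raw values.
    """
    found: list[str] = []
    for label, pattern in PII_PATTERNS.items():
        text, count = pattern.subn(f"[{label} REDACTED]", text)
        if count:
            found.append(label)
    return text, found
```

For example, `redact("Contact jane.doe@example.com, SSN 123-45-6789.")` returns the masked string together with `["EMAIL", "US_SSN"]`. The category list gives the monitoring layer something to count without storing the raw values.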

V. Design Principles for Responsible Chatbot Development

Developing ethical chatbots requires designers to build values directly into the design process, going beyond basic bug fixing.

- Guardrails and Prompt Moderation: Restrict topics, response tone, and scope

- Human-in-the-Loop: Sensitive decisions routed for human verification

- Explainability Modules: Enable transparency into how responses are generated

- Diverse Training Data: Include diverse and representative examples to prevent one-dimensional learning

- Audit Logs & Version Control: Ensure traceability of model behavior

- Fairness Frameworks: Tools like IBM's AI Fairness 360 can help test for unintended bias in NLP outputs

- Real-Time Moderation APIs: Services like OpenAI's content filter or Microsoft Azure's Content Safety API help filter unsafe responses before users see them
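The guardrail and human-in-the-loop principles can be combined into a single routing decision. This minimal Python sketch is not a reference implementation. The topic labels, the `Draft` fields, and the 0.75 confidence threshold are illustrative. So is the assumption that an upstream classifier supplies a topic and a confidence score.

```python
from dataclasses import dataclass

# Hypothetical policy for an insurance assistant; real deployments would
# load these values from governed, versioned configuration.
ALLOWED_TOPICS = {"billing", "claims", "policy_coverage"}
SENSITIVE_TOPICS = {"claims"}  # decisions with legal or financial impact
MIN_CONFIDENCE = 0.75          # below this, a human reviews the reply


@dataclass
class Draft:
    topic: str          # label from an upstream intent classifier (assumed)
    text: str           # drafted model reply
    confidence: float   # classifier confidence in [0, 1] (assumed)


def route(draft: Draft) -> str:
    """Decide whether a drafted reply is refused, sent, or held for a human."""
    if draft.topic not in ALLOWED_TOPICS:
        return "refuse"          # guardrail: topic is out of scope
    if draft.topic in SENSITIVE_TOPICS or draft.confidence < MIN_CONFIDENCE:
        return "human_review"    # human-in-the-loop for risky or uncertain replies
    return "send"
```

With this policy, a `billing` draft at confidence 0.9 is sent. Any `claims` draft is held for review regardless of confidence. Keeping the policy in data (sets and a threshold) rather than in branching logic makes it easier to version and audit alongside the model.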

VI. Governance and Policy Integration

All enterprise deployments need to follow both internal organizational policies and external regulatory requirements.

- GDPR/CCPA: Data handling and user consent

- EU AI Act & Algorithmic Accountability Act: Risk classification, impact assessment

- Internal AI Ethics Boards: Periodic review of deployments

- Continuous Compliance Monitoring: Real-time logging, alerting, and auditing tools

Organizations should assign each GenAI system a risk level (low, medium, or high) based on its domain, audience, and data type. AI audit checklists and compliance dashboards help document decision trails and reduce liability.
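One simple way to operationalize these tiers is to rate each attribute separately and take the highest rating. The Python sketch below is illustrative only. The attribute values and their ratings are hypothetical. They are not drawn from GDPR, CCPA, or the EU AI Act, each of which defines its own criteria.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical ratings per attribute, for illustration only.
DOMAIN = {"retail": Risk.LOW, "banking": Risk.MEDIUM,
          "healthcare": Risk.HIGH, "government": Risk.HIGH}
AUDIENCE = {"internal": Risk.LOW, "business": Risk.MEDIUM,
            "public": Risk.MEDIUM, "minors": Risk.HIGH}
DATA_TYPE = {"public": Risk.LOW, "personal": Risk.MEDIUM,
             "health": Risk.HIGH, "financial": Risk.HIGH}


def risk_tier(domain: str, audience: str, data_type: str) -> Risk:
    """Return the worst (highest) rating across the three attributes.

    Unknown values default to HIGH so that an unrecognized deployment fails safe.
    """
    return max(
        DOMAIN.get(domain, Risk.HIGH),
        AUDIENCE.get(audience, Risk.HIGH),
        DATA_TYPE.get(data_type, Risk.HIGH),
    )
```

Taking the maximum means one high-risk attribute, such as health data, is enough to place the whole deployment in the high tier. Defaulting unknown values to HIGH forces any new domain or data type to be reviewed before it runs with lighter controls.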

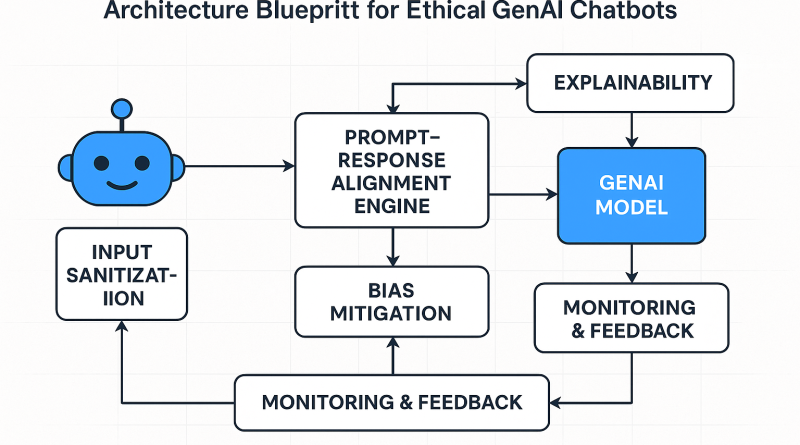

VII. A Blueprint Architecture for Ethical GenAI Chatbots

An ethical GenAI chatbot system should include:

- Input Sanitization Layer: Identifies offensive, manipulative, or ambiguous prompts

- Prompt-Response Alignment Engine: Ensures responses are consistent with corporate tone and ethical standards

- Bias Mitigation Layer: Performs real-time checks for gender, racial, or cultural skew in responses

- Human Escalation Module: Routes sensitive conversations to human agents

- Monitoring and Feedback Loop: Learns from flagged outputs and periodically retrains the model

Figure 1: Architecture Blueprint for Ethical GenAI Chatbots (AI-generated for editorial readability)

Example Flow: A user enters a borderline medical question into an insurance chatbot. The sanitization layer flags it as ambiguous, the alignment engine generates a safe response with a disclaimer, and the escalation module sends a transcript to a live support agent. The monitoring system logs the event and feeds it into retraining datasets.
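The example flow can be traced through a toy pipeline. This Python sketch uses keyword matching in place of real classifiers. The model is passed in as a plain `generate` callable. In-memory lists stand in for the live-agent queue and the retraining dataset. Every name here (`handle`, `BLOCKED_TERMS`, `MEDICAL_TERMS`, and so on) is hypothetical, and the bias mitigation layer is left as a placeholder comment.

```python
from collections.abc import Callable
from dataclasses import dataclass, field

# Hypothetical keyword lists standing in for real classifiers or a
# moderation service.
BLOCKED_TERMS = {"ignore previous instructions"}   # manipulative prompts
MEDICAL_TERMS = {"dosage", "symptom", "diagnosis"}  # borderline medical topics
DISCLAIMER = "This is general information, not medical advice."
REFUSAL = "I can't help with that request."


@dataclass
class Turn:
    prompt: str
    flags: list[str] = field(default_factory=list)
    response: str = ""
    escalated: bool = False


def sanitize(turn: Turn) -> None:
    """Input sanitization layer: flag manipulative or ambiguous prompts."""
    text = turn.prompt.lower()
    if any(term in text for term in BLOCKED_TERMS):
        turn.flags.append("manipulative")
    if any(term in text for term in MEDICAL_TERMS):
        turn.flags.append("medical_ambiguity")


def align(turn: Turn, generate: Callable[[str], str]) -> None:
    """Alignment engine: refuse, or generate and add a disclaimer if needed."""
    if "manipulative" in turn.flags:
        turn.response = REFUSAL
        return
    turn.response = generate(turn.prompt)
    if "medical_ambiguity" in turn.flags:
        turn.response += " " + DISCLAIMER


def handle(prompt: str, generate: Callable[[str], str],
           handoff_queue: list[dict], retraining_log: list[Turn]) -> Turn:
    turn = Turn(prompt)
    sanitize(turn)
    align(turn, generate)
    # A bias mitigation check on turn.response would run here (omitted).
    if turn.flags:
        # Human escalation module: hand the transcript to a live agent.
        turn.escalated = True
        handoff_queue.append({"prompt": turn.prompt, "response": turn.response})
        # Monitoring and feedback loop: keep flagged turns for retraining review.
        retraining_log.append(turn)
    return turn
```

Take the prompt "What dosage is covered for my prescription?". The sanitizer flags it as `medical_ambiguity`, and the disclaimer is appended to the generated reply. The transcript goes onto the handoff queue, and the turn is logged for retraining, mirroring the example flow. An unflagged question passes straight through without escalation.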

VIII. Real-World Use Cases and Failures

- Microsoft Tay: A chatbot that was corrupted within 24 hours by unmoderated interactions

- Meta's BlenderBot: Criticized for producing offensive content and spreading false information

- Salesforce's Einstein GPT: Implemented human review and compliance modules to support enterprise adoption

These examples show that ethical breakdowns happen in real operational environments. The question is not whether failures will occur but when, and whether organizations have response mechanisms in place.

IX. Metrics for Ethical Performance

Enterprises need to establish new measurement criteria that go beyond accuracy.

- Trust Scores: Based on user feedback and moderation frequency

- Fairness Metrics: Distributional performance across demographics

- Transparency Index: How explainable the outputs are

- Safety Violations Count: Instances of inappropriate or escalated outputs

- Retention vs. Compliance Trade-off: How user experience is balanced against ethical enforcement

Real-time enterprise dashboards display these metrics to provide instant snapshots of ethical health and surface potential intervention points. Organizations are now integrating ethical metrics into quarterly performance reviews alongside CSAT, NPS, and average handling time, establishing ethics as a primary KPI for CX transformation.
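Two of these metrics can be computed directly from conversation logs. In the Python sketch below, the record fields (`group`, `resolved`, `flagged`) are hypothetical. `group` could be a user's language or region, and the resolution rate stands in for "performance." The fairness figure is the largest gap in per-group resolution rate, one simple form of distributional comparison. Toolkits such as IBM's AI Fairness 360 offer more rigorous metrics.

```python
from collections import defaultdict


def fairness_gap(records: list[dict]) -> tuple[dict[str, float], float]:
    """Return per-group resolution rates and the largest gap between groups.

    A gap near 0 means the assistant performs similarly for every group;
    a large gap points to a group that is being served worse.
    """
    if not records:
        raise ValueError("need at least one record")
    totals: dict[str, int] = defaultdict(int)
    resolved: dict[str, int] = defaultdict(int)
    for r in records:
        totals[r["group"]] += 1
        resolved[r["group"]] += int(r["resolved"])
    rates = {g: resolved[g] / totals[g] for g in totals}
    return rates, max(rates.values()) - min(rates.values())


def safety_violations(records: list[dict]) -> int:
    """Count conversations flagged as inappropriate or escalated."""
    return sum(1 for r in records if r["flagged"])
```

A dashboard could recompute both values over a rolling window. It could also alert when the fairness gap or the violation count crosses a threshold set by the ethics board.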

X. Future Trends: From Compliance to Ethics-by-Design

Tomorrow's GenAI systems will be value-driven by design rather than merely compliant. The industry expects advances in:

- New-Age APIs with Embedded Ethics

- Regulatory Sandboxes: Highly controlled environments for testing AI systems

- Sustainability Audits for energy-efficient AI deployment

- Cross-cultural Simulation Engines for international readiness

Large organizations are creating new roles such as AI Ethics Officers and Responsible AI Architects to monitor unintended consequences and oversee policy alignment.

XI. Conclusion: Building Chatbots Users Can Trust

The future of GenAI as a core enterprise tool depends on embracing its capabilities while upholding ethical standards. Every element of chatbot design, from prompts to policies, must demonstrate a commitment to fairness, transparency, and accountability. Performance alone does not create trust; trust is the outcome that matters. The winners of this era will be enterprises that deliver responsible solutions, protect user dignity and privacy, and build lasting trust. Developing ethical chatbots demands collaboration among engineers, ethicists, product leaders, and legal advisors. Our ability to create AI systems that benefit everyone depends on working together.

Author Bio:

Satya Karteek Gudipati is a Principal Software Engineer based in Dallas, TX, specializing in scalable enterprise-grade systems, cloud-native architectures, and multilingual chatbot design. With over 15 years of experience building scalable platforms for global clients, he brings deep expertise in Generative AI integration, workflow automation, and intelligent agent orchestration. His work has been featured in IEEE, Springer, and several trade publications. Connect with him on LinkedIn.

References

1. McKinsey & Company. (2023). *The State of AI in 2023*. [Link](https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-in-2023)

2. IBM AI Fairness 360 Toolkit. (n.d.). [Link](https://aif360.mybluemix.net/)

3. EU Artificial Intelligence Act – Proposed Legislation. [Link](https://artificialintelligenceact.eu/)

4. OpenAI Moderation API Overview. [Link](https://platform.openai.com/docs/guides/moderation)

5. Microsoft Azure Content Safety. [Link](https://learn.microsoft.com/en-us/azure/ai-services/content-safety/overview)

The post From Prompt to Policy: Building Ethical GenAI Chatbots for Enterprises appeared first on Datafloq.