Why Data Quality Is the Keystone of Generative AI

As organizations race to adopt generative AI tools, from AI writing assistants to autonomous coding platforms, one often-overlooked variable makes the difference between game-changing innovation and disastrous missteps: data quality.

Generative AI doesn’t generate insights from thin air. It consumes data, learns from it, and produces results that reflect the quality of what it was trained on. This article explores the critical relationship between data quality and generative AI success, and how businesses can ensure their data is ready for the AI age.

Understanding Data Quality

Data quality refers to the condition of a dataset in terms of its accuracy, completeness, consistency, timeliness, validity, and relevance. It determines whether data is fit for its intended purpose, whether that’s driving decisions, training models, or fueling customer experiences.

While often seen as a backend or IT concern, data quality is now a strategic priority. Why? Because in the era of AI, low-quality data can scale errors, introduce bias, and erode trust faster and more broadly than ever before.

Key Dimensions of Data Quality

Let’s break down the six most important dimensions:

Accuracy – Does the data correctly represent real-world entities?

Accurate data ensures AI systems generate meaningful and reliable outputs. Even small errors can lead to large-scale inaccuracies in model outputs.

Completeness – Are all required data fields present and filled?

Incomplete records limit context and reduce the effectiveness of AI training. Models rely on comprehensive data to detect patterns and relationships.

Consistency – Is data uniform across systems and formats?

Conflicting data values across sources can confuse AI models. Consistency helps maintain integrity across the data pipeline, from ingestion to inference.

Timeliness – Is the data up to date and available when needed?

Outdated or delayed data can skew AI predictions and limit real-time applications. Timely updates ensure decisions are made on current and relevant information.

Validity – Does the data conform to rules, formats, or standards?

Data that violates expected formats (e.g., incorrect email syntax or invalid dates) can disrupt processing. Validity safeguards model stability and reliability.

Relevance – Is the data useful for the specific AI application?

Not all data adds value; relevant data ensures the AI is learning from meaningful input aligned with its purpose.

Each of these dimensions becomes critical in training AI models that are expected to reason, generate, and interact at a human-like level.
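Three of these dimensions (completeness, validity, and timeliness) lend themselves to simple automated checks. The following is a minimal sketch using only the Python standard library, not a production validator. The field names (`email`, `signup_date`, `updated_at`), the one-year staleness threshold, and the deliberately simple email pattern are all assumptions made for illustration.

```python
import re
from datetime import date, timedelta

# Hypothetical schema: these field names are assumptions for illustration.
REQUIRED_FIELDS = ("email", "signup_date", "updated_at")
# Deliberately simple pattern; it is not a full email-address validator.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def is_valid_date(text):
    """Return True if text parses as an ISO date that exists on the calendar."""
    try:
        date.fromisoformat(text)
        return True
    except (TypeError, ValueError):
        return False


def check_record(record, today, max_age_days=365):
    """Return a list of quality issues for one record (empty if none found)."""
    issues = []
    # Completeness: every required field is present and non-empty.
    for name in REQUIRED_FIELDS:
        if not record.get(name):
            issues.append(f"missing:{name}")
    # Validity: values that are present must match the expected format.
    email = record.get("email")
    if email and not EMAIL_PATTERN.match(email):
        issues.append("invalid:email")
    for name in ("signup_date", "updated_at"):
        value = record.get(name)
        if value and not is_valid_date(value):
            issues.append(f"invalid:{name}")
    # Timeliness: flag records not updated within the allowed window.
    updated = record.get("updated_at")
    if updated and is_valid_date(updated):
        if today - date.fromisoformat(updated) > timedelta(days=max_age_days):
            issues.append("stale:updated_at")
    return issues
```

Calling `check_record` on every row before training gives a per-record issue list that can be aggregated into dataset-level quality metrics. Accuracy, consistency, and relevance usually need reference data or domain judgment, so they are left out of this sketch.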

Understanding Data Quality in Generative AI

Generative AI models like GPT, DALL-E, or Claude rely on massive datasets to learn language patterns, relationships, and context. When these training datasets are flawed, even powerful models can produce skewed, misleading, or offensive outputs.

Here’s how data quality affects generative AI performance:

- Bias and Stereotyping: If training data contains biased language or historical inequalities, the model will reproduce and reinforce them.

- Hallucinations: Incomplete or invalid data can cause AI to “hallucinate,” confidently producing false facts.

- Inaccuracy in Outputs: Misinformation in source data leads to misinformation in AI-generated results.

- Regulatory Risk: Poor data handling can violate privacy laws or industry-specific regulations.

For businesses, this means poor data quality doesn’t just degrade model accuracy; it threatens reputation, compliance, and customer trust.

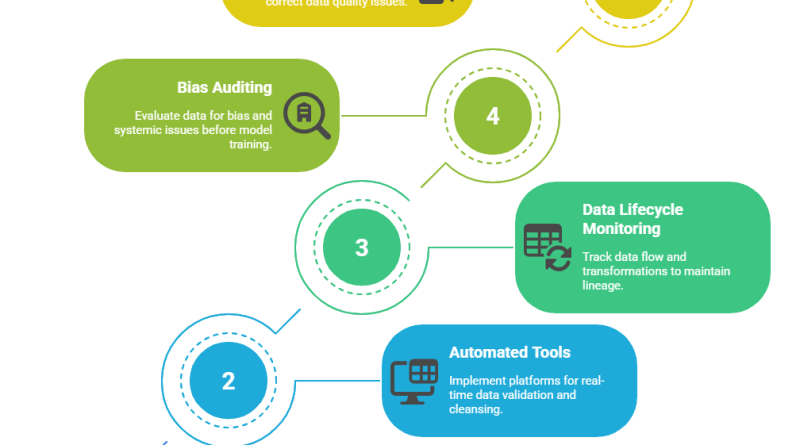

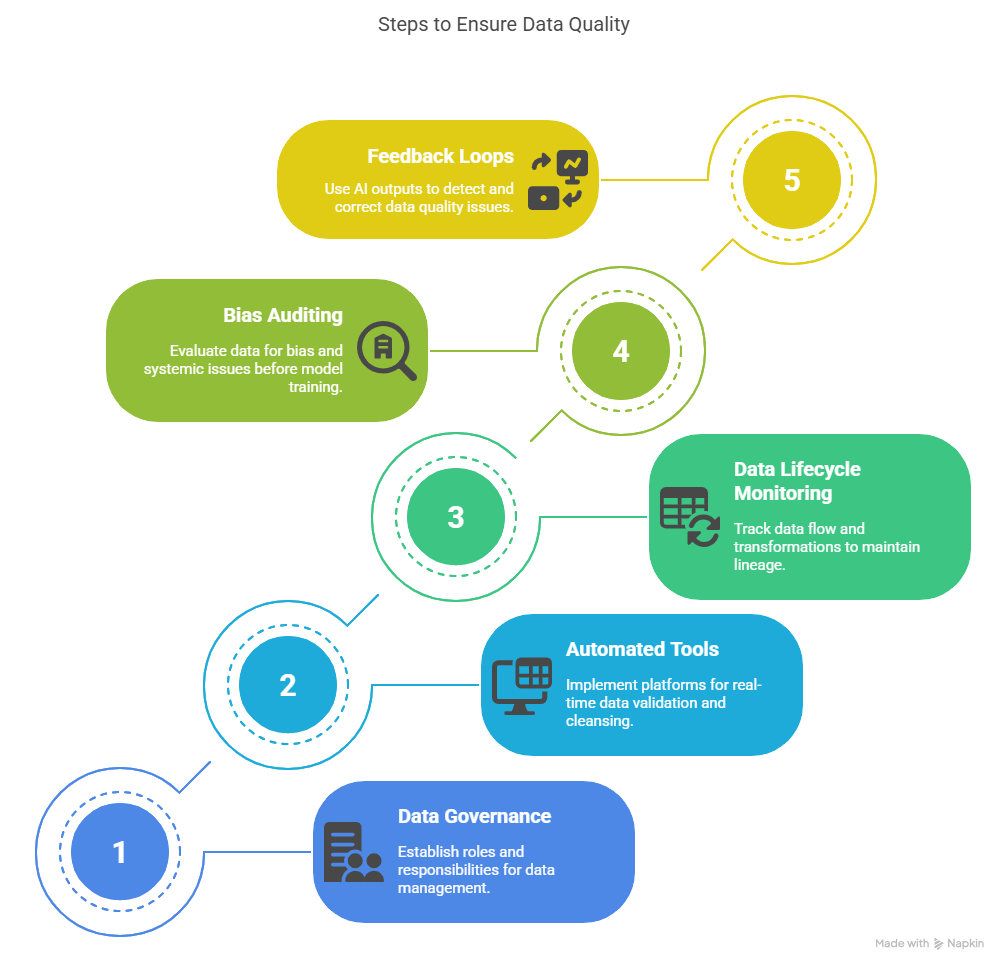

How to Ensure Data Quality?

Achieving high data quality isn’t a one-time fix; it’s a continuous effort that involves both technology and governance. Here are proven steps to ensure your data is AI-ready:

1. Establish Data Governance Frameworks

Define roles, responsibilities, and accountability for data across your organization. This includes naming data stewards, creating quality metrics, and enforcing data ownership.

2. Leverage Automated Data Quality Tools

Use platforms that can validate, clean, standardize, and enrich data in real time. Tools like Melissa, Talend, and Informatica help automate large-scale cleansing operations with precision.

3. Monitor Data Lifecycle

Track where data comes from, how it’s transformed, and where it flows. Maintaining lineage ensures you know the provenance of the data fueling your AI.
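As a rough illustration of lineage tracking, the sketch below records each transformation together with row counts and content hashes, so any dataset version can be traced back through the steps that produced it. The `LineageLog` class and `fingerprint` helper are hypothetical names invented for this example; dedicated lineage tools capture far more detail.

```python
import hashlib
import json
from dataclasses import dataclass, field


def fingerprint(rows):
    """Stable SHA-256 fingerprint of a list of JSON-serializable rows."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class LineageLog:
    """Hypothetical helper: remembers where data came from and what changed it."""
    source: str
    steps: list = field(default_factory=list)

    def apply(self, name, transform, rows):
        """Run transform on rows and record the step before returning the result."""
        result = transform(rows)
        self.steps.append({
            "step": name,
            "rows_in": len(rows),
            "rows_out": len(result),
            "input_hash": fingerprint(rows),
            "output_hash": fingerprint(result),
        })
        return result
```

Because each step stores both an input and an output hash, a break in the chain (an output hash that does not match the next step’s input hash) signals that data was modified outside the tracked pipeline.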

4. Bias Auditing and Testing

Before feeding data into models, evaluate it for bias, gaps, or systemic issues. Implement fairness metrics and conduct adversarial testing during model training.
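The article does not name a specific fairness metric. One common choice is the demographic parity difference: the gap in positive-outcome rates between groups. The sketch below computes it on a labeled dataset before training. The function names and the `group_key` and `label_key` parameters are assumptions for this example.

```python
from collections import defaultdict


def positive_rate_by_group(rows, group_key, label_key):
    """Share of rows with a truthy label, computed separately for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in rows:
        group = row[group_key]
        totals[group] += 1
        if row[label_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Largest difference in positive rate between any two groups."""
    return max(rates.values()) - min(rates.values())
```

A gap near zero means the groups receive positive labels at similar rates. A large gap is a prompt for closer review, not proof of bias on its own, since legitimate factors can also produce different rates.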

5. Feedback Loops

Use AI outputs to detect potential quality issues and adjust upstream data sources accordingly. Model behavior is a reflection of the data; monitor it like you would customer feedback.

Conclusion

As generative AI continues to reshape industries and redefine innovation, one principle remains clear: the quality of data directly influences the quality of outcomes. No matter how powerful the model, without clean, accurate, and relevant data, its potential is compromised.

By embedding data quality into every stage of your AI pipeline, from collection to deployment, you not only enhance performance but also build systems that are transparent, ethical, and trusted. In a world driven by intelligent automation, investing in data quality isn’t just smart; it’s essential.

The post Why Data Quality Is the Keystone of Generative AI appeared first on Datafloq.